Michael Gonzalez

Robots will be able to do everything better than us…I am not sure exactly what to do about this. – Elon Musk

Note: Members of the Dividend Freedom Tribe got early access to this article.

Written by Sam Kovacs

Introduction

I’m sure by now you’ve heard of the ChatGPT craze.

They reached 1 million users.

I was one of the early ones and have been spending at least 2 hours a day interacting with the AI to learn how I can best leverage this novel technology.

Yesterday though, I went to log in to try out a few scenarios with ChatGPT, and I got hit with the following message:

A lightbulb went off.

Wait, they’re at capacity! That means they need to scale to support mass demand and this mass creation of data.

The amount of text I’ve generated through ChatGPT in such a short time is mind-boggling.

The amount of artwork I’ve generated using Midjourney is also staggering.

Author’s art. (Midjourney)

(This is the portrait of a character persona which I built in extreme detail using ChatGPT, then had Midjourney paint. Impressive, no?)

Here is the “aha” moment:

The needs of AI are going to require so much more computer processing and data than we can imagine. The growth is truly going to be exponential and secular.

There seems to be no way around this: deploying large-scale natural language processing AIs is going to be the backbone of a new evolution of the web, which will produce vastly more content than Web 2 ever did.

In experiments I’ve run, I have already built out the entire lives of fictional human characters, with portraits of them from Midjourney, their goals, and a hundred tweets to start each character’s Twitter profile.

This does highlight the need for human verification online, but it also highlights the massive need for hardware and infrastructure.

Deploying Large Scale NLP AIs

I realized this, but I also knew that by no means was I an expert in the different moving parts involved in scaling a solution like ChatGPT, or its future variations.

I did what now already seems to be the natural thing to do: I asked ChatGPT.

Question: What is the key hardware infrastructure required at scale for ChatGPT to be used by billions?

This is what ChatGPT answered:

To deploy a model like ChatGPT at scale, you would need a significant amount of computational power and storage. One way to achieve this would be to use a cluster of powerful servers or cloud-based instances, such as those provided by Amazon Web Services (AMZN), Google Cloud Platform (GOOG), or Microsoft Azure (MSFT).

In terms of computational power, ChatGPT is a transformer-based model that uses attention mechanisms, which can require a lot of memory and processing power. For example, the original GPT-2 model, which was trained on a dataset of 40 GB of text data, was trained using several powerful GPUs. More recent and larger models like GPT-3, which was trained on 570 GB of text data and 175 billion parameters, would require even more computational resources.

To handle the storage needs, you would need a large distributed file system, such as HDFS or GPFS. This would allow you to store the data used to train the model, as well as the model itself, across multiple machines.

In addition to the computational and storage requirements, deploying a model like ChatGPT at scale also requires significant networking capabilities to handle the large amounts of data being sent between the various servers in the cluster. This can be achieved by using high-speed network interfaces and technologies such as InfiniBand or RDMA to minimize data transfer latency.

For future versions of NLP AI, we can expect the need of computational power and storage to continue to increase as the models become larger and more complex, as well as the need to incorporate more diverse data. The latest trend also focus on on-device AI or edge computing, therefore, the future models will require to be lightweight and can run on mobile devices or low-power edge devices with less computational power and storage but still achieving good results.

Quite compelling, don’t you think?

The writing is on the wall: we’re going to be needing more hardware and infrastructure.

So what are the various verticals and players in these industries?

The various players and verticals in the industries

Once again demonstrating its versatility as a tool, ChatGPT happily complies when asked to provide a list of publicly listed companies operating in each of the verticals that produce the necessary hardware and associated services.

I even got it to put all the tickers in brackets which was a big win.

I now have the following list of companies.

Cloud providers:

- Amazon Web Services (AMZN)

- Google Cloud Platform (GOOG) (GOOGL)

- Microsoft Azure (MSFT)

- Alibaba Cloud (BABA)

- IBM Cloud (IBM)

Graphics Processing Unit manufacturers:

- NVIDIA (NVDA)

- AMD (AMD)

- Intel (INTC)

- Qualcomm (QCOM)

- Google (no standalone stock; it is a subsidiary of Alphabet)

Central Processing Unit manufacturers:

Data Center and Network Equipment Providers:

- Dell Technologies (DELL)

- IBM

- Cisco Systems (CSCO)

- Juniper Networks (JNPR)

- Huawei Technologies (private company)

- Arista Networks (ANET)

- Hewlett Packard Enterprise (HPE)

Memory and Storage Solutions:

- Micron Technology (MU)

- Western Digital (WDC)

- Seagate Technology (STX)

- Toshiba Memory (private company)

- SK Hynix (listed on the Korea Exchange)

- Pure Storage (PSTG)

Edge AI and IoT Hardware:

Bringing focus to the dividend payers

Of these stocks, the following pay a dividend:

Microsoft, IBM, NVIDIA, AMD, Intel, Cisco Systems, Hewlett Packard Enterprise, Micron Technology, Western Digital, Seagate Technology, Marvell Technology Group, Broadcom.

That’s still quite a neat list of 12 companies, enough to build up a watchlist.

Unfortunately, doing so reveals something we already knew:

The only stocks which are investable through our dividend freedom method are the following:

- Intel, IBM, & Broadcom: stocks which have been covered by the Dividend Freedom Tribe for a long time.

- Seagate.

Intel

I made the case for Intel in what I believe was our most popular article in 2022: The $1 Trillion Opportunity.

Intel is well-positioned to become the leading chip manufacturer in the United States due to a growing need for self-sufficiency in semiconductor production.

The majority of high-complexity chips are currently produced by Taiwan Semiconductor Manufacturing (TSM).

However, with tensions between China and Taiwan, the supply chain of semiconductors is at risk of being compromised.

The CHIPS for America Act aims to increase domestic production of semiconductors and other microelectronics in the US by providing $52.7 billion in subsidies over the next five years and a 25% investment tax credit for chip plants worth an estimated $24 billion over the next decade.

Intel is expected to be a major beneficiary of the CHIPS Act, with estimates of $10 billion to $15 billion worth of U.S. government subsidies over the next five years.

As the world becomes more multipolar, self-sufficiency is becoming increasingly important, especially in digital infrastructure. Intel’s case for becoming America’s premier foundry is therefore rather strong.

This will take a few years to play out, and it is not a done deal, but the trend towards de-globalization and a more polarized world gives Intel an incredible opportunity, as it is one of a handful of manufacturers worldwide able to produce the advanced silicon that high-end computer chips require.

But when you consider that the market is pricing INTC at $29.60, which gives it a yield just shy of 5%, you know that you’re getting plenty of compensation for the risks.

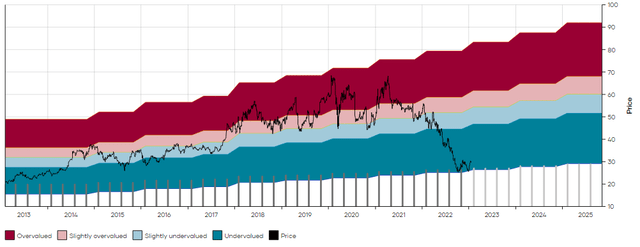

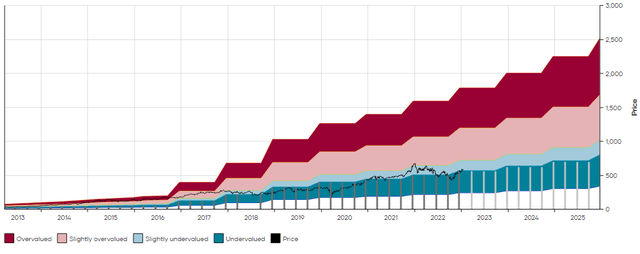

INTC MAD Chart (Dividend Freedom Tribe)

The dividend is safe, as it still represents just 44% of operating cashflow.

It seems like a lay-up given the huge industry wide tailwinds over the upcoming decade.

IBM

IBM is one of the most interesting companies when it comes to the AI revolution:

1. They’ve been a pioneer in AI, investing in AI and its predecessor technologies for decades. Watson, their natural language processing platform, is the best-known and most obvious example.

2. IBM still designs some of the best microprocessors for B2B applications.

3. With the Red Hat acquisition, they are well positioned in the cloud business. Clients already use their cloud and hybrid cloud offerings to run their AI workloads.

IBM’s turnaround and transition post spinoff is well underway, but we’re not totally out of the woods yet.

The dividend eats up 93% of free cashflow, which limits dividend growth until the business grows.

In Q3, IBM recorded 15% revenue growth YoY at constant currency, which is impressive and shows just how ready the company is to shed its reputation as a stagnant mega-corporation.

More than half of IBM’s revenue is now recurring, which is exceptionally good.

IBM costs $145 and yields 4.55%.

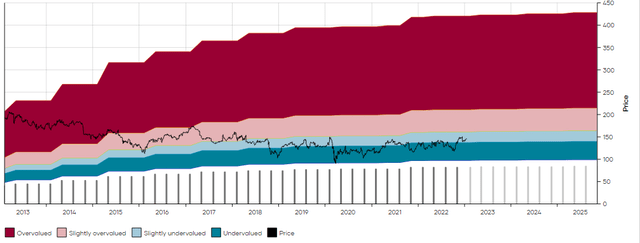

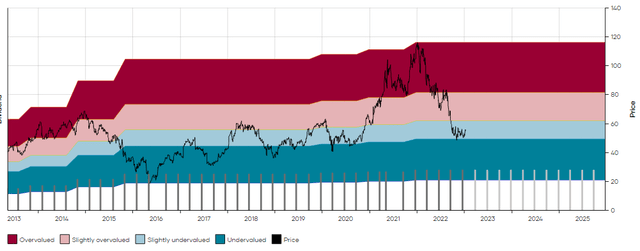

IBM MAD Chart (Dividend Freedom Tribe)

As you can see in the MAD Chart above, the current yield is still somewhat higher than the 10-year median yield of 4.1%.

But when you look at the past 5 years, the yield is actually below the median and entering overvalued territory.

IBM 5y MAD Chart (Dividend Freedom Tribe)

For this reason, IBM is slightly above the “buy below” target we share with our members and is a “hold” for now.
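To make the 10-year comparison concrete, the sketch below converts the gap between the current yield and the median yield into a price. It uses only the $145 price, 4.55% yield, and 4.1% 10-year median quoted above; the 5-year median is not stated in the text, so that comparison is left out. The function names are illustrative, and this is a generic yield-based valuation assumed for the example, not necessarily how the MAD Chart or the “buy below” target is computed.

```python
def annual_dividend(price: float, dividend_yield: float) -> float:
    """Annual dividend per share implied by a price and a yield."""
    return price * dividend_yield


def price_at_yield(dividend: float, target_yield: float) -> float:
    """Share price at which `dividend` would produce `target_yield`."""
    if target_yield <= 0:
        raise ValueError("target_yield must be positive")
    return dividend / target_yield


# Inputs quoted in the article (rounded figures).
ibm_price = 145.0
ibm_yield = 0.0455
ibm_median_yield_10y = 0.041

# Back out the dividend, then reprice the stock at its 10-year median yield.
ibm_dividend = annual_dividend(ibm_price, ibm_yield)
ibm_price_at_median = price_at_yield(ibm_dividend, ibm_median_yield_10y)

# Discount of the current price to that median-yield price.
ibm_discount = 1 - ibm_price / ibm_price_at_median

print(f"Implied annual dividend: ${ibm_dividend:.2f}")
print(f"Price at 10-year median yield: ${ibm_price_at_median:.2f}")
print(f"Discount to that price: {ibm_discount:.1%}")
```

On these inputs, the price at which IBM would yield its 10-year median works out to about $161, roughly 10% above the current price. A shorter lookback with a higher median yield would shrink or reverse that gap, which is the point the 5-year chart makes.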

When IBM reports earnings for Q4 next week, we will review it with members of the Tribe and adjust our targets based on the results.

Broadcom

Broadcom is up 35% since we last wrote about the stock in October.

Part of me believes, until proven wrong, that October was the bottom of the bear market and the bottom of the recession. Let’s see.

AVGO reported record revenue for fiscal year 2022, with consolidated revenue reaching $33.2 billion, growing 21% year-on-year. Operating profits grew by 28% year-on-year and free cashflow per share by 25% YoY.

Growth was strong across all of Broadcom’s verticals:

- In fiscal Q4 2022, consolidated net revenue was $8.9 billion, up 21% year-on-year. Within that, Semiconductor Solutions revenue increased 26% year-on-year to $7.1 billion, with the wireless segment representing 29% of this revenue.

- The Infrastructure Software segment also showed growth, with revenue of $1.8 billion, up 4% year-on-year and representing 21% of total revenue.

- The Broadband segment, which designs, develops, and supplies semiconductor solutions for various applications such as cable modems, set-top boxes and cable access gateways for the broadband communications market, also showed growth, with revenue of $1 billion growing 20% year-on-year.
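The quarterly figures above can be cross-checked with a little arithmetic: divide each revenue figure by one plus its growth rate to get the implied prior-year figure, then see whether the segments add up to the total. The sketch below assumes the $8.9 billion figure is Broadcom's total quarterly revenue, and that Semiconductor Solutions and Infrastructure Software together make up that total (with Broadband being one part of the semiconductor business). All inputs are the rounded numbers from the list, so small gaps are expected.

```python
def prior_year(revenue: float, growth: float) -> float:
    """Prior-year revenue implied by current revenue and YoY growth."""
    return revenue / (1 + growth)


def share(part: float, total: float) -> float:
    """Fraction of `total` represented by `part`."""
    return part / total


# Fiscal Q4 2022 figures from the article, in billions of dollars.
total_revenue, total_growth = 8.9, 0.21
semis_revenue, semis_growth = 7.1, 0.26
software_revenue, software_growth = 1.8, 0.04

# Implied prior-year revenue, computed two ways.
prior_total = prior_year(total_revenue, total_growth)
prior_from_segments = (
    prior_year(semis_revenue, semis_growth)
    + prior_year(software_revenue, software_growth)
)

software_share = share(software_revenue, total_revenue)

print(f"Prior-year total (from total):    ${prior_total:.2f}B")
print(f"Prior-year total (from segments): ${prior_from_segments:.2f}B")
print(f"Software share of revenue:        {software_share:.1%}")
```

The two prior-year estimates agree to within rounding, which suggests the segment figures and growth rates are internally consistent. The software share computed from the rounded inputs comes out slightly below the quoted 21%, again a rounding effect.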

AVGO expects its semiconductor revenue growth to sustain at approximately 20% YoY in the next quarter.

Over the weekend, I shared a short memo on Taiwan Semiconductor.

While TSM is a great company, as the manufacturer of a huge number of chips for clients worldwide, growth has slowed and will remain slow for the first half of 2023, as TSM is exposed to all verticals of the semiconductor industry.

We have argued for a while that Broadcom has exposure to the best verticals in the semiconductor industry, as its clients have massive tailwinds for decades to come, making growth more secular than cyclical. Of course there is some cyclicality, but less.

This means AVGO is continuing to grow aggressively, as the growth is structural rather than cyclical.

The stock yields 3.2% despite its 10 year dividend CAGR of 39%.

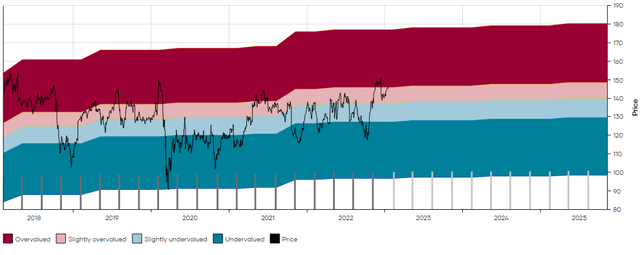

AVGO MAD Chart (Dividend Freedom Tribe)

Future growth will be lower, no doubt, but AVGO should still be able to maintain a 10-12% dividend CAGR for the upcoming decade.

This means the stock’s fair yield should gravitate between 2% and 2.5%, which suggests a target price of $740 to $920, up from the current price of $579.
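As a rough check on that range, one can back out the annual dividend implied by the current price and yield, then ask what price would produce a 2% or 2.5% yield. The sketch below assumes the rounded 3.2% yield and $579 price quoted above; the function name `price_at_yield` is illustrative and not part of any Tribe tooling.

```python
def price_at_yield(annual_dividend: float, target_yield: float) -> float:
    """Share price at which `annual_dividend` produces `target_yield`."""
    if target_yield <= 0:
        raise ValueError("target_yield must be positive")
    return annual_dividend / target_yield


# Inputs quoted in the article (rounded figures).
current_price = 579.0
current_yield = 0.032

# Implied annual dividend per share; approximate because the yield is rounded.
annual_dividend = current_price * current_yield

# A higher target yield gives the lower price, and vice versa.
low_target = price_at_yield(annual_dividend, 0.025)
high_target = price_at_yield(annual_dividend, 0.020)

print(f"Implied annual dividend: ${annual_dividend:.2f}")
print(f"Target range: ${low_target:.0f} to ${high_target:.0f}")
```

With these rounded inputs the range comes out at roughly $741 to $926, close to the target quoted above; the small difference is down to rounding in the 3.2% yield.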

There is the long-tail risk of overexposure to Taiwan (and the geopolitical risk surrounding it), but most of the industry has this problem. In a few years it might be less of one, with European and American fabs offering diversification from Taiwan.

AVGO remains very attractively priced. The market is wrong about AVGO, and it is easy to buy, buy and buy, until you hit a full allocation for the position.

Seagate

The most recent commentary we got from management came at a UBS conference. Below were the key points.

- The major factor impacting demand in the short-term is the slowdown of the economy in China, specifically due to lockdowns and the ongoing COVID-19 situation.

- Another important factor is the accumulation of US cloud inventory, as companies have been buying more for safety stock to avoid supply chain disruptions.

- Inflation and the war in Ukraine also contribute to lower consumer demand.

- The company is confident in the long-term growth of the business, driven by new applications such as artificial intelligence, machine learning, autonomous driving, internet of things and smart cities.

- The recovery is difficult to predict, but the company is trending in line with what was discussed at the earnings release.

- Possible good signs from China in terms of how they managed COVID and lockdowns may be positive for the company.

- The company is trying to help customers reduce inventory and return to a more normalized business model in the next few months.

- Gross margin is expected to recover to previous levels once revenue levels return to normal.

So you clearly get an example of a business which is still rolling with the punches of short-term cyclicality, but knows that the tailwinds are massive enough that it will see it through, and is positioning itself to succeed.

The stock currently yields 5.02%.

STX MAD Chart (Dividend Freedom Tribe)

Management has lacked consistency in increasing the dividend, but hasn’t resorted to cutting it since the Great Financial Crisis of 2009.

It has healthy payout ratios, and is a good income bet that dividend growth will ultimately resume as the company benefits from industry tailwinds.

It looks like a great buy here.

Conclusions

The A.I. revolution is here, and it has finally come out with some killer use cases which can reach billions of users: chat is a phenomenal development.

It’s only going to improve from here. Working with NLP AIs will take new forms, and this will require an ever increasing supply of data.

This data will be supported by companies which are already well established, and which can jump on the opportunity to grow massively within their existing industries.

AVGO, INTC, and STX are all great plays. IBM is as well, although only at a better price.
